Trapped in the Iron Cage: The One-Dimensional Researcher

You can also find the original Korean version of this article at: https://behindsciences.kaist.ac.kr/2019/12/23/

“12 points, 8, 15 but as a second author, so roughly 10 points. Wow, he published here? Another 10 points. What’s this one? Don’t know, pass. Oh yeah, people are definitely publishing here a lot. 3, 3, 7, ...”2

During my undergraduate years majoring in Materials Science and Engineering, I wasn't certain about pursuing graduate school, so I decided to gain some research experience first. My academic advisor introduced me to a senior student in his lab, suggesting I come by and learn about experiments. However, on my very first day, what the senior taught me wasn't experimental techniques, but rather how to read a researcher's CV (curriculum vitae). We were in the middle of discussing which lab I might consider for my graduate program. He scanned the publication list of a newly appointed professor in the department and began calculating points as if grading an exam paper. After repeating this a few times with different professors’ lists, he recommended a “good” one to me. Although bewildered at first, I found it quite impressive, thinking, “So this must be how researchers evaluate and recognize one another. Cool!”

It wasn’t until around the time I finished my undergraduate degree that I realized the numbers the senior student had been reciting were Journal Impact Factors3. Whenever I spoke with friends who had already started graduate school, the conversation invariably touched upon publishing papers, and the Impact Factor was always a central theme. Even on social media, it wasn’t uncommon to see posts boasting about where someone had published a paper, followed by numerous congratulatory comments. Interestingly, the actual content and process of the research itself rarely became the main topic of conversation.

I ended up in graduate school too, even though I changed my major to social sciences. Had my seniors emphasized it that much, or did I just take it too seriously? Before I could even finish my first semester, thoughts of “I need to publish papers quickly” began filling my mind. And so, as a new master’s student who hadn’t even determined my own research topic yet, I lived by the maxim “Publish or Perish.” Before long, I found myself considering what and how to research in order to publish numerous papers in high impact factor journals as quickly as possible.

My strategy was reasonable enough. I examined which journals had published the papers listed in my advisor’s course syllabus. I selected the journals with the highest impact factors, investigated what kind of research topics had been published in recent years, and how frequently those papers were cited. After becoming familiar with academic search engines like Scopus, Web of Science, and Google Scholar, and after skimming through hundreds of abstracts without reading most of the actual papers, it became abundantly clear that I needed to research academia-industry collaboration. And that’s how I determined my research topic.

Now I found myself drawn to internet communities like hibrain.net (a Korean academic job network), where university faculty job vacancies are posted. These sites shared information about how many publication points a PhD researcher needs to meet the requirements for a new faculty position, and how many points count for papers published in KCI (Korea Citation Index) and SCI-indexed journals4. There were plenty of “insider tips” too, like how combining several published papers into a book would count both the papers and the book as separate achievements, but writing a book first would prevent you from publishing the same content as papers, so it was better to delay writing a book. While I was preoccupied with figuring out survival strategies in academia, I heard news that my graduate school was hiring a new professor. As soon as I learned the names of the finalists, I found myself, just like that senior student from my undergraduate days, scrutinizing the number of publications from each candidate, where they were published, whether the journals were indexed in SCI, and their impact factors. Of course, I never actually read the papers.

Accelerated academy and publication as academic currency

I imagine academia filled with like-minded individuals. Every researcher evaluates others based solely on their CVs with a long list of publications and impact factors of the journals where they have published. Researchers desperately try to publish as many papers as possible in journals that are indexed in SCI or Scopus with higher impact factors. Research institutions pursue the same goals. Academic societies likewise do everything they can to get their journals included in indexing databases and to increase their impact factors. And so academia marches relentlessly toward more papers, more indexed journals, and higher impact factors5.

This isn’t merely a hypothetical scenario. Ugo Bardi, an Italian physical chemist, has pointed out that the “publish-or-perish” atmosphere, combined with a permissive peer review system that functions as a lax gatekeeper, is pushing academia toward mass production of papers. He argues that this situation, where researchers are forced to write too many papers, paradoxically demonstrates the decline of scholarship6. According to Bardi, scientific papers in today’s research system share similar characteristics with currency in the modern economy (see Table 1). Furthermore, he draws a stark comparison: academia’s fixation on paper quantity is as fundamentally unsustainable as economic systems that deplete natural resources in pursuit of endless consumption and profit maximization.

Table 1: What Currency and Papers Have in Common7

| Feature | How it applies to currency and papers |
| --- | --- |
| Emitting currency | Just as central banks issue currency, papers are only given value through the citation indexing services. |
| Spending your currency | A paper has no value in itself. It is only exchangeable like currency when you get a research grant or a job. |
| Inflation | Currency and papers gradually lose value. Older papers are less valuable, so individual researchers have to keep writing new papers. |
| Interest on currency | Just as money in the bank earns interest, researchers submit a list of their publications to funding organizations to receive funding and generate more research output. |
| Assaying | Just as coins and banknotes are issued after being verified by an authorized organization, papers are also verified through a process called peer review before being distributed. |
| Counterfeiting | Bad journals are published without verification, just like counterfeit bills. |
| Bad money replaces the good | Just as bad money drives out good money in economics (Gresham’s Law), papers are often valued by their quantity rather than quality, resulting in a proliferation of low-quality publications. |
| Ponzi schemes and multi-level marketing | A business model that travels across time and place is also strategically utilized by many bogus journals. |

Academia isn’t solely focused on maximizing paper counts. Extending Ugo Bardi’s idea, we can say that papers have different “exchange rates” depending on the journals they appear in and those journals’ impact factors. Depending on how frequently a paper is cited, it might experience a slower rate of “inflation” or even undergo “deflation” in some cases. Other types of academic “currency” also exist, such as patents, technology transfers, and research grants. All these currency values combine to determine the worth of individual researchers, and extend further to the ranking of universities, research institutes, and even entire countries. It’s similar to how companies are ranked by revenue or market value, and nations by GDP or economic growth rates.

There’s no upper limit to the performance metrics that determine a researcher’s worth, but research funding and job opportunities are finite, even scarce. Competition is therefore inevitable. Within academia’s race to maximize performance indicators, there are researchers who simultaneously pursue multiple research projects to maintain a continuous stream of publications. They experience an acceleration not just in the growing number of papers, but in every aspect of academic life. A research group led by Czech theoretical sociologist Filip Vostal has termed this phenomenon the “Accelerated Academy” and organizes academic seminars under the same name8. This acceleration refers not only to the increased rate at which researchers publish, but also to the intensified time pressure they experience. Real-world researchers cannot devote themselves exclusively to conducting research as their time is limited. Meanwhile, performance standards continually rise on all fronts: for continuing research, gaining recognition in one’s field, and achieving personal satisfaction. As a result, time becomes an ever more precious commodity.

Paradoxically, it’s the early-career researchers who both drive academia’s acceleration and become its casualties. Unlike their senior colleagues who have already established their positions, these newcomers frequently find themselves eliminated from the race despite publishing significant numbers of papers. As a result, endless pressure, anxiety, and stress are their daily reality. Indeed, recent studies have consistently revealed serious mental health concerns among doctoral students and early-career researchers9.

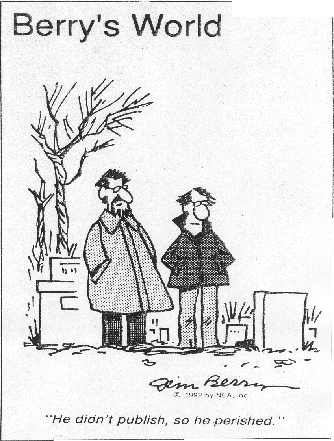

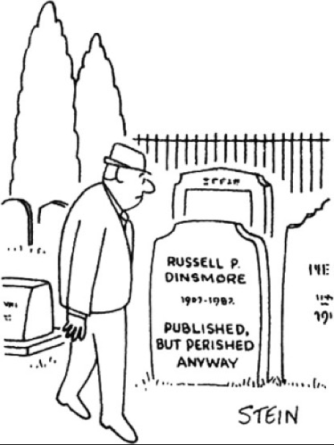

Figure 1: ‘If you don't publish, you will be rejected’ for senior researchers (left) and ‘If you publish, you will be rejected anyway’ for junior researchers (right)10

In response to these desperate pleas, some emphasize the need for advising from supervisors and senior researchers, as well as systematic mental health services11. But can these measures do more than merely alleviate the immediate symptoms? I once found myself confiding in my advisor when I couldn’t bear the stress and anxiety anymore. In the back of my mind, I was also frustrated with our department’s curriculum, which focused more on critical reading seminars than on paper writing. While I complained, my professor listened quietly before dismissing me with a single sentence: “Good researchers eventually find good positions anyway.” That was something she could say because she was already a professor. But it was also perhaps the best thing she could say in her position.

The classes I took in other departments, almost as if escaping, were uniformly practical. They covered everything: choosing research topics, examining relevant journals, finding resources for the latest research methodologies, understanding journal editors’ preferences, and writing papers. Every aspect of these courses was aligned with efficient paper publication strategies. Just attending these classes brought me a sense of relief.

One professor scheduled time with students individually during the midterm period to read and provide feedback on their research proposals. I was grateful that this professor, who had consistently published in so-called “top journals,” showed such dedication even to students from other departments. At the time, I was deeply interested in university entrepreneurship policies and told the professor I wanted to conduct case analyses and interviews to develop a theory explaining necessity-driven entrepreneurship, a topic rarely addressed in previous studies. The professor immediately waved dismissively and said, “No, no. That kind of research is for established scholars. It would be difficult to publish in top journals with that approach.” It struck me then: while I had strategically chosen my research field, why had I overlooked the fact that research content including topics and methodologies also needed to be selected strategically?

Peer Review and Researcher Identity Constructed by Performance Metrics

In fact, academia has always functioned as both a financial system and a competitive society. What makes the discussions by Bardi, Vostal, and the “Accelerated Academy” research group novel is their focus on how academia’s operation as such a system has changed over time. Traditionally, the currency circulating in academia was recognition. Researchers were concerned more with authorship than with paper counts. Beyond copyright issues, plagiarism was considered a violation of research ethics precisely because it attempted to steal recognition from others. Researchers accumulated recognition to build reputation, secure positions, and obtain research funding.

Academia recognizes only firsts. The famous dispute between Newton and Leibniz over who first developed calculus is a well-known example. This explains why many researchers still worry about being “scooped”12, having their ongoing research published by someone else first. In a world that only remembers the first, fierce competition is inevitable. Academia has always been a competitive society where recognition is the prize.

Recognition stems from interaction. To recognize someone, you must either directly understand their research or at least make your own judgment based on evaluations from others in the same field. Einstein is praised as a great scientist not simply because of his 300 publications in prestigious journals and their citations, but because other scientists actually read, evaluated, and acknowledged his work. In a research system based on recognition, researchers’ self-identities were formed within a network where peers continuously assessed and acknowledged each other’s work.

Today, however, researchers’ self-identities are constructed by performance metrics. Their CVs display endless lists of publications rather than descriptions of the research done or its significance. Simultaneously, genuine peer review culture is vanishing from academia. There’s no need to spare precious time to scrutinize what other researchers are studying, whether their methods are robust, whether their results are valid, and what the implications are. Once two or more anonymous reviewers approve a paper, we consider it worthy of publication. Papers in journals indexed in citation databases like SCI seem even more trustworthy. Researchers have effectively outsourced their role of evaluation, with citation indexing services and performance metrics filling the void. Without these, academia as we know it could no longer function, because the research system has transformed into a financial system and competitive society where higher performance indicators replace genuine recognition13.

Performance metrics have established themselves as the new operating principles of academia through two main legitimation mechanisms. First, they allegedly allowed for productive and efficient management of research activities at various scales. Second, compared to recognition-based evaluation with its unclear criteria and foundations, metrics were perceived as rational and objective, thus becoming the standard measure for evaluating both researchers and their work.

However, as already pointed out, the first mechanism has actually made academia unsustainable rather than improving it. It’s also well documented that the supposed increases in productivity and efficiency resulting from the management of metrics are merely an illusion14.

The second mechanism also deserves careful scrutiny regarding what rationality and objectivity truly mean in research evaluation. American sociologist Michèle Lamont’s observations of peer review sessions revealed not a simple measurement of academic excellence, but rather a complex deliberative process15. In these settings, researchers exchanged viewpoints across various criteria - originality, methodology, feasibility - and continuously negotiated both the meaning and relative importance of each criterion. Subjects to be evaluated were often interpreted differently, sometimes leading to clashes between individual or disciplinary values. In short, evaluation results emerged only after a complex process of deliberation and consensus-building.

Performance metrics, however, lack this deliberative process. There’s insufficient consideration of what characteristics these metrics possess and what values they incorporate or exclude. Lamont questions whether performance indicators can truly be rational and objective, or to put it differently, whether rationality and objectivity should be the primary values when determining research evaluation methods. This leads us to reflect on what advantages performance metrics offer over researchers who strive for rational and objective evaluations despite recognizing their own limitations. Are we perhaps mistaking metrics for rationality and objectivity simply because they are quantitative?

Escaping from Being the One-dimensional Researcher in the Iron Cage

After a year of persistent investigative reporting by Newstapa, the issue of fraudulent academic activities became a major controversy both within and outside academia16. Meaningless sham conferences where anyone could take the podium and say anything as long as they paid a registration fee; predatory journals that would publish virtually any article regardless of quality if publication fees were paid; and researchers who used research funds for these activities and then submitted the resulting publications as legitimate achievements - all provoked significant public outrage. The Korean government identified and disciplined researchers who had attended the conferences mentioned in the reports17. The impact was so significant that even a nominee for Minister of Science, Technology, and ICT was forced to withdraw due to controversy over his attendance at a predatory conference.

The report from a survey conducted by the Biological Research Information Center (BRIC) after the incident reveals how the academic community perceives questionable research practices18. Researchers identified "quantitative research evaluation metrics" (34%) and "individual researchers' academic dishonesty" (33%) as the primary causes of the issue. Only 14%, which is less than half of either of the top responses, pointed to "internal apathy, indifference, and loss of healthy oversight within academia." Regarding institutions that should take responsibility, "research funding organizations" and "government departments" were the most frequently mentioned (34% and 24%, respectively). In contrast, only 8% believed that individual academic societies, which constitute the fundamental units of the academic community, should be held accountable. Researchers' perceptions influenced reporting by various media outlets, including Newstapa19, and were ultimately reflected in the policy reforms the government announced in May 2019 to conclude its overall response to the situation20. The approach framed questionable research practice as a problem, identified quantitative research evaluation and lack of research ethics as its causes, and established a causal relationship between them.

According to the causal relationship defined by researchers and the government, questionable research practices would disappear if quantitative evaluation were converted to qualitative evaluation and researchers received ethics training. However, the scope of questionable research practices is too ambiguous to hastily define it as a problem. Even Jeffrey Beall, the professional librarian who greatly contributed to raising awareness about questionable academic publishing, labeled his blacklist as “Potentially, possibly, or probably predatory publishers.” The proposed solutions seem equally hollow. What does qualitative evaluation actually entail? Does limiting the number of papers that can be listed in achievement sections to five, while including citation counts and journal impact factors, constitute qualitative assessment? Similarly, research ethics awareness cannot be effectively raised by merely adding new clauses to the list of research misconduct only after the media brings attention to them.

I interpret the emergence of questionable research practices, the establishment of quantitative research evaluation as a concrete system, and the lack of research ethics awareness among researchers as phenomena all stemming from the same cause. That cause is the reality that performance indicators constitute researchers’ self-identity, and that these researchers in turn constitute an academia of, by, and for performance indicators. Rather than hastily proposing solutions, I hope researchers and the government will quickly recognize this uncomfortable truth, and first diagnose and examine the current state of academia.

As a researcher, I left graduate school without significant accomplishments, fearing endless anxiety and pressure about maintaining a livelihood. I attributed the fears that constantly overwhelmed me to my lack of perseverance and talent. However, looking back from outside the university, I realized what I truly feared wasn’t making a living. Rather, I was afraid of becoming a one-dimensional researcher who only maximizes performance metrics within a cage-like academia where everything is legitimized by indicators. I want to find my identity as a researcher through endless interactions with other researchers. I hope my work contributes to the academic community I build together with other scholars, rather than just lengthening my CV or securing another research grant. In this way, I hope academia develops more slowly but more solidly.

- This article was originally written in Korean and first published in Behind Sciences (Sep 2019, Vol. 7), a biannual magazine published by KAIST STP students. The author translated the text into English in April 2025, as the views expressed in this piece still reflect the author's perspective on academic publishing culture, both at the time of original writing and today. ↩

- I should clarify that the remarks attributed to people mentioned in the article were not formally recorded, so they may differ from what was actually said. However, these are undoubtedly memories that had a significant impact on me, as I still vividly recall the moments I heard them. ↩

- Impact Factor is a journal-level citation metric published annually by Clarivate Analytics for journals listed in their citation indices under the Web of Science platform. It is calculated by dividing the number of citations received in a given year by the articles a journal published in the previous two years by the number of articles the journal published during those two years, making it an average citation count per article over that two-year window. While citation count doesn’t necessarily equal impact, this article uses the original metric name rather than translating it, to prevent confusion. This is also how researchers commonly use the term. ↩

- The term “SCI-level journals” collectively refers to journals indexed in the four citation indices under Web of Science mentioned in footnote 3: SCI (Science Citation Index), SCIE (SCI Expanded), SSCI (Social Sciences Citation Index), and A&HCI (Arts and Humanities Citation Index). In some cases, journals indexed in Scopus, a citation index operated by the international academic publisher Elsevier, are also included. In South Korea, the National Research Foundation of Korea operates KCI (Korea Citation Index) for domestic journals, which are classified into KCI-listed and KCI-candidate. ↩

- Michael Fire and Carlos Guestrin (2019), “Over-optimization of academic publishing metrics: observing Goodhart’s Law in action”, GigaScience 8 no. 6, giz053. ↩

- Ugo Bardi (2014.8.11), The decline of science: why scientists are publishing too many papers. Link ↩

- Ibid. ↩

- For the Accelerated Academy conference, see http://accelerated.academy. For the articles from the research project, see Link ↩

- For example, see Katia Levecque et al. (2017), “Work organization and mental health problems in PhD students”, Research Policy 46, no. 4, pp. 868–879; Guardian (2017.8.10), “The human cost of the pressures of postdoctoral research”. ↩

- (Left) Newspaper Enterprise Association (1992), “Berry’s World”; (Right) Nick Mortimer (2014), “Publishing your thesis”, New Zealand Journal of Geology and Geophysics. 57 no. 4, p.355. ↩

- Nature (2019), “Being a PhD student shouldn’t be bad for your health”, Nature 569, p. 307. ↩

- When ongoing research is published first by another researcher or group, this is called being “scooped.” ↩

- In late 2011, the Korean government's Ministry of Education, Science and Technology announced plans to abolish the journal listing system and encourage self-evaluation by academia... The decision was ultimately postponed. See the following articles: Dong-A Ilbo (2011.12.08), “Professor ‘Achievement Building’ Journal Registration System Abolished”; Policy Briefing from Korean Government (2013.07.19), “Maintenance and Improvement of Academic Journal Registration System”; Korea University Newspaper (2013.07.23), “Decision to Maintain Journal Registration System is Inevitable.” ↩

- The fact that a performance indicator becomes useless the moment it is selected and applied for performance measurement is known as “Goodhart’s Law” or “Campbell’s Law.” ↩

- Lamont, M. (2009). How professors think: Inside the curious world of academic judgment. Harvard University Press. ↩

- See https://newstapa.org/eng_category?name=FAKE_SCIENCE ↩

- Policy Briefing from Korean Government (2019.5.13), “Ministry of Education and Ministry of Science, Technology and ICT Set Out to Establish Responsible Research Culture in Universities” ↩

- BRIC, Hankyoreh Future & Science (2018.09.10), “Survey on Perception and Response Measures regarding the WASET Predatory Conference Issue” ↩

- For example, Newstapa (2018.8.2), “Quantitative Evaluation for Inflating Performance Led to ‘Fake Academic Conference Disaster’” ↩

- Ministry of Education and Ministry of Science, Technology and ICT (2019.5), “Improvement Plan for Establishing University Research Ethics and Research Management for the Settlement of Honest and Responsible Research Culture” ↩